Build your first Neural Network in TensorFlow 2 | TensorFlow for Hackers (Part I)

— Deep Learning, Neural Networks, TensorFlow, Python — 4 min read


TL;DR Build and train your first Neural Network model using TensorFlow 2. Use the model to recognize clothing type from images.

Ok, I’ll start with a secret — I am THE fashion wizard (as long as we’re talking tracksuits). Fortunately, there are ways to get help, even for someone like me!

Can you imagine a really helpful browser extension for “fashion accessibility”? Something that tells you the type of clothing you’re looking at.

After all, I really need something like this. I found out nothing like this exists, without even searching for it. Let’s make a Neural Network that predicts clothing type from an image!

Here’s what we are going to do:

- Install TensorFlow 2

- Take a look at some fashion data

- Transform the data, so it is useful for us

- Create your first Neural Network in TensorFlow 2

- Predict what type of clothing is shown in images your Neural Network hasn’t seen

Setup

With TensorFlow 2 just around the corner (not sure how far along that corner is, though), making your first Neural Network has never been easier (as far as TensorFlow goes).

But what is TensorFlow? It’s a Machine Learning platform (really, Google?) created and open-sourced by Google. Note that TensorFlow is not a special-purpose library for creating Neural Networks, although it is primarily used for that purpose.

So, what does TensorFlow 2 have in store for us?

> TensorFlow 2.0 focuses on simplicity and ease of use, with updates like eager execution, intuitive higher-level APIs, and flexible model building on any platform.

Alright, let’s check those claims and install TensorFlow 2 from your terminal:

```shell
pip install tensorflow-gpu==2.0.0-alpha0
```

Fashion data

Your Neural Network needs something to learn from. In Machine Learning, that something is called a dataset. The dataset for today is called Fashion MNIST.

> Fashion-MNIST is a dataset of Zalando’s article images — consisting of a training set of 60,000 examples and a test set of 10,000 examples. Each example is a 28x28 grayscale image, associated with a label from 10 classes.

In other words, we have 70,000 grayscale images, each 28 pixels wide and 28 pixels high. Each image shows one of 10 possible clothing types. Here is one:

Here are some images from the dataset along with the type of clothing they show:

Here are all the different types of clothing:

| Label | Description |
|---|---|
| 0 | T-shirt/top |
| 1 | Trouser |
| 2 | Pullover |
| 3 | Dress |
| 4 | Coat |
| 5 | Sandal |
| 6 | Shirt |
| 7 | Sneaker |
| 8 | Bag |
| 9 | Ankle boot |

Now that we’re familiar with the data, let’s make it usable for our Neural Network.

Data Preprocessing

Let’s start with loading our data into memory:

```python
import tensorflow as tf
from tensorflow import keras

(x_train, y_train), (x_val, y_val) = keras.datasets.fashion_mnist.load_data()
```

Fortunately, TensorFlow has the dataset built in, so we can easily obtain it.

Loading it gives us 4 things:

- x_train: image (pixel) data for 60,000 clothing items, used for training our model.

- y_train: the class (clothing type) of each image in x_train, also used for training our model.

- x_val: image (pixel) data for 10,000 clothing items, used for testing/validating our model.

- y_val: the class (clothing type) of each image in x_val, also used for testing/validating our model.

Now, your Neural Network can’t really see images as you do, but it can understand numbers. Each pixel of each image in our dataset is a number between 0 and 255. We would like to scale that data to the 0–1 range (why? The truth is more nuanced, but roughly speaking, it helps with training a better model). How can we do it?

We will use TensorFlow’s Dataset API (tf.data.Dataset) to prepare our data:

```python
def preprocess(x, y):
    # Scale pixel values from 0-255 to 0-1
    x = tf.cast(x, tf.float32) / 255.0
    # y is already one-hot encoded by create_dataset; store it as int64
    y = tf.cast(y, tf.int64)

    return x, y

def create_dataset(xs, ys, n_classes=10):
    # Turn integer labels (0-9) into one-hot vectors of length n_classes
    ys = tf.one_hot(ys, depth=n_classes)
    return tf.data.Dataset.from_tensor_slices((xs, ys)) \
        .map(preprocess) \
        .shuffle(len(ys)) \
        .batch(128)
```

Let’s unpack what is happening here. What does tf.one_hot do? Let’s say you have the following vector:

```
[0, 1, 2, 0]
```

Here is the one-hot encoded version of it (with depth 3, one column per possible value):

```
[
  [1, 0, 0],
  [0, 1, 0],
  [0, 0, 1],
  [1, 0, 0]
]
```

It puts a 1 at the index given by each number and 0 everywhere else.
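To make the encoding concrete, here is a minimal NumPy sketch that reproduces the table above. The helper one_hot_numpy is a hypothetical name used only for illustration; in the actual pipeline we call tf.one_hot, and this sketch only mirrors its behavior for labels in the range [0, depth).

```python
import numpy as np


def one_hot_numpy(labels, depth):
    """Hypothetical helper: one-hot encode integer labels in [0, depth)."""
    labels = np.asarray(labels)
    encoded = np.zeros((labels.shape[0], depth), dtype=np.float32)
    # In each row, put a 1 in the column given by that row's label.
    encoded[np.arange(labels.shape[0]), labels] = 1.0
    return encoded


print(one_hot_numpy([0, 1, 2, 0], depth=3))
```

For Fashion MNIST, depth is 10, so each label becomes a vector of 10 numbers with a single 1.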

We create a Dataset from the data using from_tensor_slices and, in preprocess, divide each pixel of the images by 255 to scale it to the 0–1 range.
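The scaling step is plain arithmetic, so it can be checked outside TensorFlow. The sketch below uses random uint8 arrays as a stand-in for Fashion MNIST images (an assumption for illustration; the real images come from load_data()) and applies the same cast-then-divide as preprocess.

```python
import numpy as np

# Stand-in for a few Fashion-MNIST images: uint8 pixels in 0..255.
# Random data for illustration only.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(4, 28, 28), dtype=np.uint8)
images[0, 0, 0] = 0    # make sure both extremes are present
images[0, 0, 1] = 255

# Same arithmetic as preprocess(): cast to float32, then divide by 255.
scaled = images.astype(np.float32) / 255.0

print(images.dtype, images.min(), images.max())
print(scaled.dtype, scaled.min(), scaled.max())
```

Casting before dividing matters: it makes sure the result is a float32 array in the 0–1 range rather than integer pixel values.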

Then we use shuffle and batch to randomize the order of the examples and group them into chunks (batches) of 128.

Why shuffle the data, though? We don’t want our model to make predictions based on the order of the training data, so we just shuffle it.
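Here is a NumPy sketch of what shuffling and batching do to 60,000 training examples. It uses toy data (each "image" is just its index and its label is index % 10, an assumption chosen so we can check the pairing). tf.data shuffles whole (image, label) elements, which is why the sketch applies one permutation to both arrays.

```python
import numpy as np

n_examples, batch_size = 60_000, 128

# Toy stand-ins: each "image" is its index and its label is index % 10,
# so we can verify that shuffling keeps every image paired with its label.
xs = np.arange(n_examples)
ys = xs % 10

# Shuffle images and labels with the SAME permutation.
rng = np.random.default_rng(42)
perm = rng.permutation(n_examples)
xs_shuffled, ys_shuffled = xs[perm], ys[perm]

# Split into consecutive chunks of batch_size; the last chunk may be smaller.
batches = [
    (xs_shuffled[i:i + batch_size], ys_shuffled[i:i + batch_size])
    for i in range(0, n_examples, batch_size)
]

print(len(batches))         # 469
print(len(batches[-1][0]))  # 96
```

Because 60,000 is not a multiple of 128, the final batch holds only 96 examples; Dataset.batch keeps such a partial batch unless drop_remainder=True is passed.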

I am truly sorry for that bad joke:

Create your first Neural Network

You’re doing great! It’s time for the fun part: using the data to create your first Neural Network.

```python
train_dataset = create_dataset(x_train, y_train)
val_dataset = create_dataset(x_val, y_val)
```

Build your Neural Network using Keras layers

They say TensorFlow 2 has an easy high-level API. Let’s take it for a spin:

```python
model = keras.Sequential([
    keras.layers.Reshape(
        target_shape=(28 * 28,), input_shape=(28, 28)
    ),
    keras.layers.Dense(
        units=256, activation='relu'
    ),
    keras.layers.Dense(
        units=192, activation='relu'
    ),
    keras.layers.Dense(
        units=128, activation='relu'
    ),
    keras.layers.Dense(
        units=10, activation='softmax'
    )
])
```

Turns out the high-level API is the old Keras API, which is great.

Most Neural Networks are built by “stacking” layers. Think pancakes or lasagna. Your first Neural Network is really simple. It has 5 layers.

The first (Reshape) layer is called an input layer and takes care of converting the input data into the shape the layers below expect. Our images are 28*28=784 pixels, so we’re just flattening each 2D 28x28 array into a 1D array of 784 values.
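A quick NumPy sketch of what that flattening does to the shapes. The toy image holds the values 0 to 783 so we can see where each pixel ends up; rows are laid end to end (row-major order, which tf.reshape also uses).

```python
import numpy as np

# A toy 28x28 "image" whose pixel values are 0..783.
image = np.arange(28 * 28, dtype=np.float32).reshape(28, 28)

# What the Reshape layer does to each example: 2D (28, 28) -> 1D (784,).
flat = image.reshape(28 * 28)
print(image.shape, "->", flat.shape)  # (28, 28) -> (784,)

# Rows are laid end to end: the first pixel of row 1 lands at position 28.
print(flat[28] == image[1, 0])  # True

# Keras applies the reshape per example, so the batch axis is kept.
batch = np.zeros((128, 28, 28), dtype=np.float32)
print(batch.reshape(-1, 28 * 28).shape)  # (128, 784)
```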

All other layers are Dense (fully connected): each neuron receives every output of the previous layer. The units parameter sets the number of neurons in each layer. The activation parameter specifies a function that decides whether “the opinion” of a particular neuron in the layer should be taken into account, and to what degree. There are a lot of activation functions one can use; here we use ReLU for the hidden layers and softmax for the output layer.
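Under the hood, a Dense layer computes activation(inputs @ kernel + bias). The NumPy sketch below runs one such layer with ReLU on a batch of flattened images. The random weights are placeholders for the learned ones (an assumption for illustration), and dense_forward is a hypothetical helper, not a Keras function. It also counts the trainable parameters of each Dense layer in our model (inputs × units weights plus units biases), which should match the totals model.summary() reports for this architecture.

```python
import numpy as np


def relu(z):
    # ReLU keeps positive values and zeroes out negative ones.
    return np.maximum(z, 0.0)


def dense_forward(inputs, kernel, bias, activation):
    # Hypothetical helper: every input feeds every neuron (inputs @ kernel), plus a bias.
    return activation(inputs @ kernel + bias)


rng = np.random.default_rng(0)
x = rng.random((128, 784), dtype=np.float32)  # a batch of flattened images
kernel = rng.normal(0.0, 0.05, size=(784, 256)).astype(np.float32)  # placeholder weights
bias = np.zeros(256, dtype=np.float32)

h = dense_forward(x, kernel, bias, relu)
print(h.shape)  # (128, 256)

# Trainable parameters per Dense layer: n_in * n_out weights + n_out biases.
layer_sizes = [784, 256, 192, 128, 10]
params = [n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]
print(params, sum(params))  # [200960, 49344, 24704, 1290] 276298
```

The Reshape layer has no parameters, so almost all of the model’s weights live in the first Dense layer.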

The last (output) layer is a special one. It has 10 neurons because we have 10 different types of clothing in our data, and its softmax activation turns their outputs into probabilities that sum to 1. You get the predictions of the model from this layer.
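Here is a minimal NumPy sketch of softmax, the activation on the output layer. The raw scores are made-up numbers standing in for the 10 output neurons of one image.

```python
import numpy as np


def softmax(logits):
    # Subtracting the max keeps exp() from overflowing; the result is unchanged.
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


# Made-up raw outputs of the 10 output neurons for one image.
logits = np.array([0.5, -1.0, 6.0, 2.0, 0.0, -0.5, 1.0, -2.0, 0.3, -1.5])
probs = softmax(logits)

print(probs.round(3))
print(probs.sum())       # 1.0, up to floating-point rounding
print(np.argmax(probs))  # 2, the neuron with the largest raw score
```

Softmax preserves the ordering of the scores, so the most likely class is always the neuron with the largest raw output.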

Train your model

Right now your Neural Network is plain dumb. It is like a shell without a soul (good that you get that). Let’s train it using our data:

```python
model.compile(
    optimizer='adam',
    # The output layer already applies softmax, so the model outputs
    # probabilities rather than logits: from_logits must be False.
    loss=tf.losses.CategoricalCrossentropy(from_logits=False),
    metrics=['accuracy']
)

history = model.fit(
    train_dataset.repeat(),
    epochs=10,
    steps_per_epoch=500,
    validation_data=val_dataset.repeat(),
    validation_steps=2
)
```

Training a Neural Network consists of choosing an objective measure of how far the model’s predictions are from the truth (the loss) and an algorithm that knows how to improve on it (the optimizer).

TensorFlow lets us specify the optimizer algorithm we’re going to use (Adam) and the measurement, or loss function (CategoricalCrossentropy, since we’re choosing between 10 different types of clothing). We’re measuring the accuracy of the model during training, too!
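Categorical cross-entropy compares a one-hot label with the predicted probabilities: for one example it is -Σ y_i log(p_i), which reduces to minus the log of the probability given to the correct class. Below is a small NumPy sketch on a toy 3-class example (fewer classes than our 10, for readability). categorical_crossentropy here is a hypothetical helper, not the Keras implementation.

```python
import numpy as np


def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    # Hypothetical helper. Clip probabilities to avoid log(0).
    y_pred = np.clip(y_pred, eps, 1.0 - eps)
    # Per-example loss: -sum_i y_i * log(p_i), then average over the batch.
    return float(np.mean(-np.sum(y_true * np.log(y_pred), axis=-1)))


y_true = np.array([[0.0, 1.0, 0.0]])  # the correct class is index 1
confident = np.array([[0.05, 0.90, 0.05]])
unsure = np.array([[0.30, 0.40, 0.30]])

print(categorical_crossentropy(y_true, confident))  # ~0.105, i.e. -log(0.9)
print(categorical_crossentropy(y_true, unsure))     # ~0.916, i.e. -log(0.4)
```

The more probability the model puts on the correct class, the lower the loss. That is the quantity Adam tries to push down during training.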

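The actual training takes place when the fit method is called. We give it our training and validation data and specify how many epochs we’re training for. Usually, one epoch means showing all of the training data to the model once. Here, though, repeat() makes both datasets endless, so an epoch is defined by steps_per_epoch (500 batches) instead, and validation_steps=2 evaluates only 2 batches of validation data after each epoch.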
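A quick back-of-the-envelope check of what those fit arguments mean, using the batch size of 128 from create_dataset and the dataset sizes from Fashion MNIST:

```python
batch_size = 128                 # from create_dataset()
train_size, val_size = 60_000, 10_000

steps_per_epoch = 500            # from model.fit()
validation_steps = 2

# Training images the model sees per "epoch" as configured.
print(steps_per_epoch * batch_size)               # 64000
print(steps_per_epoch * batch_size / train_size)  # ~1.07 passes over the training set

# Validation images evaluated after each epoch.
print(validation_steps * batch_size)              # 256
print(validation_steps * batch_size / val_size)   # 0.0256
```

So each epoch is slightly more than one pass over the training data, while the reported val_accuracy is computed on only 256 of the 10,000 validation images.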

Here is a sample result of our training:

```
Epoch 1/10 500/500 [==============================] - 9s 18ms/step - loss: 1.7340 - accuracy: 0.7303 - val_loss: 1.6871 - val_accuracy: 0.7812
Epoch 2/10 500/500 [==============================] - 6s 12ms/step - loss: 1.6806 - accuracy: 0.7807 - val_loss: 1.6795 - val_accuracy: 0.7812
...
```

I got ~82% accuracy on the validation set after 10 epochs. Let’s profit from our model!

Making predictions

Now that your Neural Network has “learned” something, let’s try it out:

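```python
# Note: val_dataset is shuffled, so the order of these predictions
# does not match the order of x_val and y_val.
predictions = model.predict(val_dataset)
```

Here is a sample prediction: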

```
array([
  1.8154810e-07,
  1.0657334e-09,
  9.9998713e-01,
  1.1928002e-05,
  2.9766360e-08,
  4.0670972e-08,
  2.5100772e-07,
  4.5147233e-11,
  2.9812568e-07,
  3.5224868e-11
], dtype=float32)
```

Recall that we have 10 different clothing types. Our model outputs a probability distribution over them: how likely it is that each clothing type is shown in the image. To make a decision, we can pick the one with the highest probability:

```python
import numpy as np

np.argmax(predictions[0])  # 2 for the sample prediction above
```
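To turn the predicted index into a readable name, we can look it up in the label table from the Fashion data section. The sketch below assumes a hand-written CLASS_NAMES list (a hypothetical name) in the table’s label order and applies it to the sample prediction shown above.

```python
import numpy as np

# Class names in label order, copied from the label table.
CLASS_NAMES = [
    "T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
    "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot",
]

# The sample prediction shown above.
prediction = np.array([
    1.8154810e-07, 1.0657334e-09, 9.9998713e-01, 1.1928002e-05,
    2.9766360e-08, 4.0670972e-08, 2.5100772e-07, 4.5147233e-11,
    2.9812568e-07, 3.5224868e-11,
], dtype=np.float32)

predicted_label = int(np.argmax(prediction))
print(predicted_label, CLASS_NAMES[predicted_label])  # 2 Pullover
print(f"confidence: {prediction[predicted_label]:.4f}")
```

For a whole batch of predictions, np.argmax(predictions, axis=1) returns one label per row.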

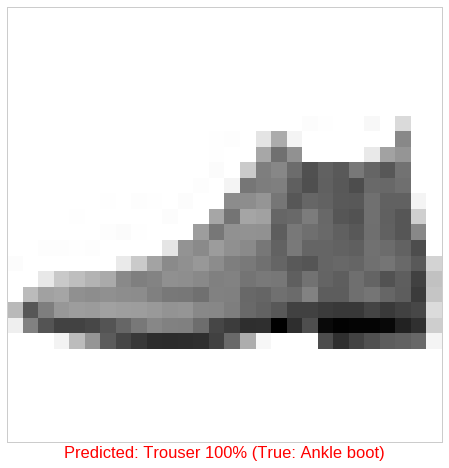

Here is a correct prediction and a wrong one from our model:

Conclusion

Alright, you got your first Neural Network running and made some predictions! You can take a look at the Google Colaboratory Notebook (including more charts) here:

One day you might realize that your relationship with Machine Learning is similar to marriage. The problems you might encounter are similar, too! What Makes Marriages Work by John Gottman and Nan Silver lists 5 problems marriages have: “Money, Kids, Sex, Time, Others”. Here are the Machine Learning counterparts:

Shall we tackle them together?
