Build a simple Neural Network with TensorFlow.js in JavaScript



TL;DR Learn the basics of operating on Tensors. Build a 2-layer Deep Neural Network and train it using TensorFlow.js.

In the previous part, you learned how to build a Deep Neural Network and train it with Backpropagation from scratch. This time, you’ll use TensorFlow.js and see how much simpler things can get!

Run the complete source code on CodeSandbox

In this part, you’ll learn how to:

- Manipulate Tensors and understand their properties

- Do basic math operations on Tensors

- Build a Deep Neural Net and train it with the TensorFlow.js sequential API

- Use your trained model to make predictions

First, let’s learn some Tensor Kung Fu:

The magic of Tensors

Tensors are the main building blocks of TensorFlow.js. They are n-dimensional data containers. You can think of them as multidimensional arrays in languages like PHP, JavaScript, and others. Since tensors generalize scalars, vectors, and matrices, you can use them to represent any of those values.

Each Tensor has the following properties:

- rank: number of dimensions

- shape: size of each dimension

- dtype: data type of the values
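The sketch below makes rank and shape concrete without using any library. It derives both from a nested array in plain JavaScript. The helper inferShape() is hypothetical and is not part of TensorFlow.js. It also assumes the array is rectangular, as tensor data must be.

```javascript
// Plain JavaScript, no TensorFlow.js needed.
// inferShape() is a hypothetical helper: it walks down the first element of
// each nesting level and records that level's length. It assumes the array
// is rectangular (every row has the same length), as tensor data must be.
function inferShape(value) {
  const shape = []
  let level = value
  while (Array.isArray(level)) {
    shape.push(level.length)
    level = level[0]
  }
  return shape
}

const scalar = 5
const vector = [1, 2, 3]
const matrix = [
  [1, 2, 3],
  [4, 5, 6],
]

for (const [name, value] of [["scalar", scalar], ["vector", vector], ["matrix", matrix]]) {
  const shape = inferShape(value)
  // The rank is just the number of dimensions, i.e. the length of the shape.
  console.log(`${name}: shape [${shape}], rank ${shape.length}`)
}
// scalar: shape [], rank 0
// vector: shape [3], rank 1
// matrix: shape [2,3], rank 2
```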

Let’s start by creating your first Tensor:

```javascript
import * as tf from "@tensorflow/tfjs"

const t = tf.tensor([1, 2, 3])
```

Check its rank:

```javascript
console.log(t.rank)
```

```
1
```

That confirms that your Tensor is 1-dimensional. Let’s check the shape:

```javascript
console.log(t.shape)
```

```
[3]
```

1-dimensional with 3 values. But how can you see the contents of this thing?

```javascript
console.log(t)
```

```
Tensor {kept: false, isDisposedInternal: false …}
```

Not what you expected, right? Tensors are custom objects with a print() method that outputs their values:

```javascript
t.print()
```

```
Tensor
    [1, 2, 3]
```

You can also get a flattened array of the data:

```javascript
console.log(t.dataSync())
```

```
Float32Array {0: 1, 1: 2, 2: 3}
```

Of course, the values don’t have to be just numeric. You can create tensors of strings:

```javascript
const wordTensor = tf.tensor(["hello", "world"])
console.log(wordTensor.dataSync())
```

```
["hello", "world"]
```

You can use tensor2d() to create matrices (or 2-dimensional tensors):

```javascript
const matrix = tf.tensor2d([
  [1, 2, 3],
  [4, 5, 6],
])
console.log(matrix.shape)
```

```
[2, 3]
```

There are some utility methods that will be handy when we start developing models. Let’s start with ones():

```javascript
tf.ones([2, 2]).print()
```

```
Tensor
    [[1, 1],
     [1, 1]]
```

You can use reshape() to change the dimensionality of a Tensor:

```javascript
tf.tensor([1, 2, 3, 4]).reshape([2, 2]).print()
```

```
Tensor
    [[1, 2],
     [3, 4]]
```

Tensor math

Unfortunately, JavaScript doesn’t support operator overloading. This leaves us with a rather crappy interface for doing math operations. But what are you going to do? Skip the math?
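Here is what the missing operator overloading means in practice, shown in plain JavaScript with no TensorFlow.js involved. Applying + to two arrays converts both to strings and concatenates them. Element-wise math therefore has to go through a function call instead. The add() helper below is hypothetical and exists only for illustration.

```javascript
// Plain JavaScript: `+` on arrays is not element-wise addition.
// Both arrays are converted to strings ("1,2,3" and "4,5,6") and concatenated.
const a = [1, 2, 3]
const b = [4, 5, 6]
console.log(a + b) // 1,2,34,5,6

// Without operator overloading, element-wise math needs a function call.
// add() is a hypothetical helper, not a TensorFlow.js function.
const add = (x, y) => x.map((value, i) => value + y[i])
console.log(add(a, b).join(", ")) // 5, 7, 9
```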

You can use tf.add() to do element-wise addition:

```javascript
const a = tf.tensor([1, 2, 3])
const b = tf.tensor([4, 5, 6])

a.add(b).print()
```

```
Tensor
    [5, 7, 9]
```

Alternatively, you can rewrite this as:

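```javascript
tf.add(a, b).print()
```

You should already be familiar with taking the weighted sum of two tensors (the dot product). Use dot(), also available as tf.dot(). For two 2D tensors, it performs matrix multiplication: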

```javascript
const d1 = tf.tensor([
  [1, 2],
  [1, 2],
])

const d2 = tf.tensor([
  [3, 4],
  [3, 4],
])

d1.dot(d2).print()
```

```
Tensor
    [[9, 12],
     [9, 12]]
```

Finally, let’s have a look at transpose():

```javascript
tf.tensor([
  [1, 2],
  [3, 4],
])
  .transpose()
  .print()
```

```
Tensor
    [[1, 3],
     [2, 4]]
```

You can think of the transpose as a flipped-axis version of the input Tensor.
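To see what these operations compute, here is a sketch that implements them over plain nested arrays. It covers element-wise addition, dot() on two 2D tensors (matrix multiplication), and transpose(). The helper names are hypothetical. The inputs are the same ones used above, so the results should match the printed tensors.

```javascript
// Plain-JavaScript versions of the operations above, on nested arrays.
// add(), matMul(), and transpose() are hypothetical helper names.

// Element-wise addition: pair up values at the same index.
const add = (a, b) => a.map((value, i) => value + b[i])

// Matrix multiplication (what dot() does for two 2D tensors):
// out[i][j] is the weighted sum of row i of `a` and column j of `b`.
const matMul = (a, b) =>
  a.map(row => b[0].map((_, j) => row.reduce((sum, value, k) => sum + value * b[k][j], 0)))

// Transpose: element [i][j] moves to [j][i], so rows become columns.
const transpose = m => m[0].map((_, j) => m.map(row => row[j]))

console.log(JSON.stringify(add([1, 2, 3], [4, 5, 6]))) // [5,7,9]
console.log(JSON.stringify(matMul([[1, 2], [1, 2]], [[3, 4], [3, 4]]))) // [[9,12],[9,12]]
console.log(JSON.stringify(transpose([[1, 2], [3, 4]]))) // [[1,3],[2,4]]
```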

Have a look at all the arithmetic operations supported by TensorFlow.js.

Getting derivatives of a function

The real power of libraries like TensorFlow.js is that they can calculate derivatives of arbitrary functions built from their operations. Think about it: you don’t have to implement the backward step of the Backpropagation algorithm yourself.

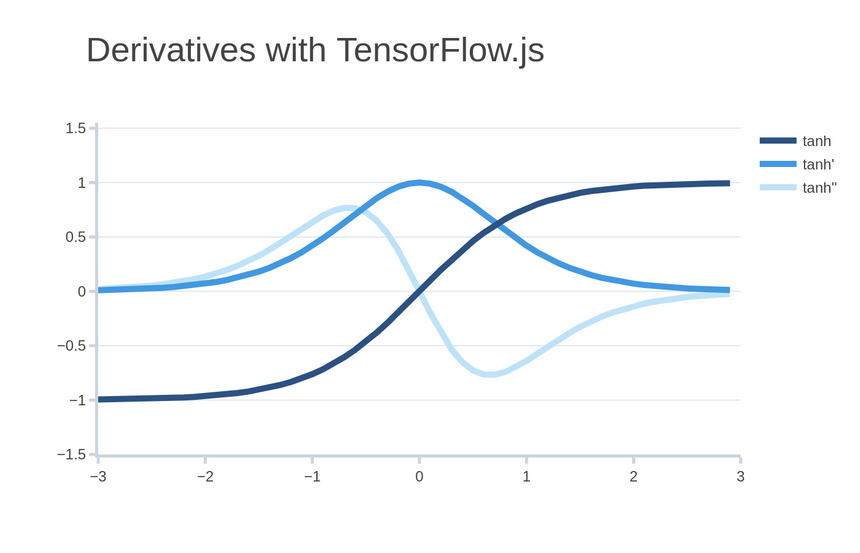

Here’s how you can make a function that takes the derivative of tanh():

```javascript
const tanh = x => tf.sinh(x).div(tf.cosh(x))

const tanhFirstDerivative = tf.grad(tanh)
```

Wrapping your function with tf.grad() returns a function that computes the gradient with respect to its input. You can get the second derivative using the same approach:

```javascript
const tanhSecondDerivative = tf.grad(tanhFirstDerivative)
```

Here’s what all those functions look like:

The set of techniques for automatically finding derivatives is known as automatic differentiation, or autodiff. Modern autodiff libraries are very efficient and are almost always hidden behind fancy APIs.

If you’re interested in finding out more about the autodiff API in TensorFlow.js, look at the gradient operations.
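Here is a taste of how autodiff can work: a minimal sketch of forward-mode automatic differentiation with dual numbers, in plain JavaScript. Every value carries its derivative along with it, and each primitive applies the chain rule. This is a different flavor from the reverse mode (Backpropagation) that tf.grad() relies on, so treat it only as an illustration of the idea. The names dual, sinh, cosh, div, and tanh below are hypothetical helpers, not TensorFlow.js functions.

```javascript
// Forward-mode autodiff with dual numbers: each value is paired with its
// derivative { val, der }, and every primitive applies the chain rule.
const dual = (val, der = 0) => ({ val, der })

const sinh = x => dual(Math.sinh(x.val), Math.cosh(x.val) * x.der) // sinh' = cosh
const cosh = x => dual(Math.cosh(x.val), Math.sinh(x.val) * x.der) // cosh' = sinh
// Quotient rule: (u / v)' = (u'v - uv') / v²
const div = (u, v) =>
  dual(u.val / v.val, (u.der * v.val - u.val * v.der) / (v.val * v.val))

// Same definition as the TensorFlow.js version: tanh(x) = sinh(x) / cosh(x)
const tanh = x => div(sinh(x), cosh(x))

// Seeding the input's derivative with 1 yields d/dx tanh(x).
const tanhFirstDerivative = x => tanh(dual(x, 1)).der

console.log(tanhFirstDerivative(0.5)) // autodiff result
console.log(1 - Math.tanh(0.5) ** 2) // closed form 1 - tanh²(x), for comparison
```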

Training a Deep Neural Net

You’re still trying to predict tomorrow’s number of infections for a new virus. You have data for each of the previous days:

```javascript
const DATA = tf.tensor([
  // infections, infected countries
  [2.0, 1.0],
  [5.0, 1.0],
  [7.0, 4.0],
  [12.0, 5.0],
])

// Shape [4, 1]: one target value per row of DATA
const nextDayInfections = tf.expandDims(tf.tensor([5.0, 7.0, 12.0, 19.0]), 1)
```

Recall that building Neural Nets is usually done by stacking layers. Yes, just like a lasagne. TensorFlow.js provides a nice API to stack different types of layers:

```javascript
const HIDDEN_SIZE = 4

const model = tf.sequential()

model.add(
  tf.layers.dense({
    inputShape: [DATA.shape[1]],
    units: HIDDEN_SIZE,
    activation: "tanh",
  })
)

model.add(
  tf.layers.dense({
    units: HIDDEN_SIZE,
    activation: "tanh",
  })
)

model.add(
  tf.layers.dense({
    units: 1,
  })
)
```

This is the exact same model you created in the previous part. It has 2 hidden layers and uses tanh() as an activation function. Visit the Models section to learn more about different types of models and layers.
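Under the hood, each dense layer computes activation(input · kernel + bias), where the kernel has shape [inputs, units]. The sketch below spells out that forward pass for the three layers above in plain JavaScript. The weights are made-up constants chosen only so the shapes line up; they are not what TensorFlow.js would initialize or learn. The names dense(), fill(), and predict() are hypothetical helpers.

```javascript
// One dense layer: activation(input · kernel + bias).
// kernel has shape [inputs, units]; bias has one value per unit.
const dense = (input, kernel, bias, activation = v => v) =>
  bias.map((b, j) =>
    activation(input.reduce((sum, value, i) => sum + value * kernel[i][j], b))
  )

// Hypothetical helper: a rows × cols matrix filled with one value.
const fill = (rows, cols, value) =>
  Array.from({ length: rows }, () => Array(cols).fill(value))

const HIDDEN_SIZE = 4

// Made-up parameters with the right shapes (not trained, not TF.js defaults).
const kernel1 = fill(2, HIDDEN_SIZE, 0.1) // [2, 4]
const bias1 = Array(HIDDEN_SIZE).fill(0)
const kernel2 = fill(HIDDEN_SIZE, HIDDEN_SIZE, 0.1) // [4, 4]
const bias2 = Array(HIDDEN_SIZE).fill(0)
const kernel3 = fill(HIDDEN_SIZE, 1, 0.1) // [4, 1]
const bias3 = [0]

const predict = features => {
  const hidden1 = dense(features, kernel1, bias1, Math.tanh) // 4 values
  const hidden2 = dense(hidden1, kernel2, bias2, Math.tanh) // 4 values
  return dense(hidden2, kernel3, bias3) // 1 value, no activation
}

console.log(predict([12.0, 5.0])) // an array holding a single number
```

The output layer has no activation, which matches the last tf.layers.dense() call above. That lets it produce any real number, as a regression target like an infection count needs.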

You can get a nice overview of your model using the summary() method:

```javascript
model.summary()
```

```
_________________________________________________________________
Layer (type)                 Output shape              Param #
=================================================================
dense_Dense40 (Dense)        [null,4]                  12
_________________________________________________________________
dense_Dense41 (Dense)        [null,4]                  20
_________________________________________________________________
dense_Dense42 (Dense)        [null,1]                  5
=================================================================
Total params: 37
Trainable params: 37
Non-trainable params: 0
_________________________________________________________________
```

The number of parameters is probably higher than you expected. That’s because each dense layer has one bias value per unit in addition to its weights. For now, you can think of biases as another parameter that helps you get better models.
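You can reproduce the Param # column by hand. A dense layer with n inputs and m units has n × m weights plus m biases. Here is a quick plain-JavaScript check, with the layer sizes taken from the model above:

```javascript
// Layer sizes of the model above: input features, hidden, hidden, output.
const layerSizes = [2, 4, 4, 1]

let total = 0
for (let i = 1; i < layerSizes.length; i++) {
  const inputs = layerSizes[i - 1]
  const units = layerSizes[i]
  const params = inputs * units + units // weights + one bias per unit
  console.log(`layer ${i}: ${inputs}×${units} weights + ${units} biases = ${params}`)
  total += params
}
console.log(`Total params: ${total}`) // Total params: 37
```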

The next step is to compile the model:

```javascript
const ALPHA = 0.001

model.compile({
  optimizer: tf.train.sgd(ALPHA),
  loss: "meanSquaredError",
})
```

This tells TensorFlow.js that we want to use Stochastic Gradient Descent (SGD) for optimization. The error will be measured using the metric we defined previously: mean squared error.
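The sketch below shows what these two settings do, using mean squared error and a single gradient descent update in plain JavaScript. It simplifies heavily. It uses a hypothetical single linear neuron y = w · x + b that looks only at the first feature (infections), and it takes one full-batch step over all four examples. The function names are illustrative and are not TensorFlow.js APIs.

```javascript
// Mean squared error: average of the squared differences.
const meanSquaredError = (predictions, targets) =>
  predictions.reduce((sum, p, i) => sum + (p - targets[i]) ** 2, 0) / predictions.length

// One full-batch gradient descent step for y = w * x + b:
// move each parameter against its gradient, scaled by the learning rate.
const sgdStep = ({ w, b }, xs, ys, alpha) => {
  const n = xs.length
  let gradW = 0
  let gradB = 0
  xs.forEach((x, i) => {
    const error = w * x + b - ys[i]
    // Derivatives of the averaged squared error with respect to w and b
    gradW += (2 / n) * error * x
    gradB += (2 / n) * error
  })
  return { w: w - alpha * gradW, b: b - alpha * gradB }
}

const ALPHA = 0.001
const xs = [2.0, 5.0, 7.0, 12.0] // infections (first feature only)
const ys = [5.0, 7.0, 12.0, 19.0] // next-day infections

let params = { w: 0, b: 0 }
const lossOf = p => meanSquaredError(xs.map(x => p.w * x + p.b), ys)
console.log(lossOf(params)) // 144.75
params = sgdStep(params, xs, ys, ALPHA)
console.log(lossOf(params)) // smaller than before
```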

There are quite a lot of optimizers you can use. Which one is the best? Hard to tell, but use Adam 😉

Now you can train the model for 200 epochs:

```javascript
// Run this inside an async function (or a module with top-level await)
await model.fit(DATA, nextDayInfections, {
  epochs: 200,
  callbacks: {
    onEpochEnd: async (epoch, logs) => {
      // epoch is zero-based, so add 1 to log epochs 10, 20, ..., 200
      if ((epoch + 1) % 10 === 0) {
        console.log(`Epoch ${epoch + 1}: error: ${logs.loss}`)
      }
    },
  },
})
```

```
Epoch 10: error: 124.21926879882812
Epoch 20: error: 106.1820068359375
Epoch 30: error: 92.69414520263672
Epoch 40: error: 81.84943389892578
...
Epoch 160: error: 33.46078872680664
Epoch 170: error: 32.490299224853516
Epoch 180: error: 31.676559448242188
Epoch 190: error: 30.987218856811523
Epoch 200: error: 30.394424438476562
```

The error keeps decreasing throughout the whole training process. Looks like our model is learning something. Keep in mind that the dataset is really small, so we can’t really expect fabulous predictions out of our model.

You can get the weight values that were found during the training process. For the first hidden layer we have:

```javascript
// getWeights() returns [kernel, bias]; index 0 is the weight matrix
console.log(model.layers[0].getWeights()[0].shape)
model.layers[0].getWeights()[0].print()
```

```
[2, 4]
Tensor
    [[0.4767939 , 0.3428304, -0.5344698, 0.5965855 ],
     [-0.3558884, 0.1546917, -0.0205269, -0.2085788]]
```

Recall that we have 2 neurons in the input layer and 4 in the first hidden layer (hence the 2×4 shape).

For the second hidden layer we have:

```javascript
console.log(model.layers[1].getWeights()[0].shape)
model.layers[1].getWeights()[0].print()
```

```
[4, 4]
Tensor
    [[0.0728809 , -0.9897094, -0.034509 , -1.0883898],
     [0.5236863 , -0.4294432, 0.4234778 , 0.1519209 ],
     [-0.2439377, -0.249658 , -0.8608022, 0.3538387 ],
     [0.4874444 , -1.0027372, 0.5247142 , -0.3581437]]
```

The 4 hidden units in the previous layer and the 4 in this one give us a 4×4 shape.

Alright, you’ve seen the weight values, but do they mean something? Can you say that the first feature is more important than the second or vice-versa?

Unfortunately, the answer is NO. There is ongoing research into the interpretability/explainability of Neural Nets. That said, at the level of individual weights, you can’t say anything about what their values mean.

Making predictions

The model you’ve created and trained has a nice API to make predictions about unseen values. Let’s ask our Neural Net what the future number of infections will be:

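```javascript
const lastDayFeatures = tf.tensor([[12.0, 5.0]])
model.predict(lastDayFeatures).print()
```

```
Tensor
     [[9.5538235],]
```

The prediction of roughly 9.5 infections seems reasonable at first glance, but the trend in the data is for that number to increase. For these same features, the training data says the next day had 19 infections.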

Pretty much any Machine Learning model gets better as the number of diverse examples in the dataset increases.

Here is the complete source code:

```javascript
import * as tf from "@tensorflow/tfjs"

const DATA = tf.tensor([
  // infections, infected countries
  [2.0, 1.0],
  [5.0, 1.0],
  [7.0, 4.0],
  [12.0, 5.0],
])

const nextDayInfections = tf.expandDims(tf.tensor([5.0, 7.0, 12.0, 19.0]), 1)

const ALPHA = 0.001
const HIDDEN_SIZE = 4

const model = tf.sequential()

model.add(
  tf.layers.dense({
    inputShape: [DATA.shape[1]],
    units: HIDDEN_SIZE,
    activation: "tanh",
  })
)

model.add(
  tf.layers.dense({
    units: HIDDEN_SIZE,
    activation: "tanh",
  })
)

model.add(
  tf.layers.dense({
    units: 1,
  })
)

model.summary()

const train = async () => {
  model.compile({ optimizer: tf.train.sgd(ALPHA), loss: "meanSquaredError" })
  await model.fit(DATA, nextDayInfections, {
    epochs: 200,
    callbacks: {
      onEpochEnd: async (epoch, logs) => {
        if ((epoch + 1) % 10 === 0) {
          console.log(`Epoch ${epoch + 1}: error: ${logs.loss}`)
        }
      },
    },
  })

  console.log(model.layers[0].getWeights()[0].shape)
  model.layers[0].getWeights()[0].print()

  console.log(model.layers[1].getWeights()[0].shape)
  model.layers[1].getWeights()[0].print()

  const lastDayFeatures = tf.tensor([[12.0, 5.0]])
  model.predict(lastDayFeatures).print()
}

if (document.readyState !== "loading") {
  train()
} else {
  document.addEventListener("DOMContentLoaded", train)
}
```

Summary

And this is how you build Deep Neural Nets with TensorFlow.js. It is much simpler than building everything from scratch! But just calling some APIs without knowing what the heck you’re doing can also be much more confusing!

Run the complete source code on CodeSandbox

You learned how to:

- Manipulate Tensors and understand their properties

- Do basic math operations on Tensors

- Build a Deep Neural Net and train it with the TensorFlow.js sequential API

- Use your trained model to make predictions

You’re all done! Well, not quite. We still have to explore how to deal with images, text, and time-series data with Neural Nets. Are you ready?
