Build Your First Neural Network with PyTorch


TL;DR Build a model that predicts whether or not it is going to rain tomorrow using real-world weather data. Learn how to train and evaluate your model.

In this tutorial, you’ll build your first Neural Network using PyTorch. You’ll use it to predict whether or not it is going to rain tomorrow using real weather information.

- Run the complete notebook in your browser (Google Colab)

- Read the Getting Things Done with Pytorch book

You’ll learn how to:

- Preprocess CSV files and convert the data to Tensors

- Build your own Neural Network model with PyTorch

- Use a loss function and an optimizer to train your model

- Evaluate your model and learn about the perils of imbalanced classification

```python
%reload_ext watermark
%watermark -v -p numpy,pandas,torch
```

```
CPython 3.6.9
IPython 5.5.0

numpy 1.17.5
pandas 0.25.3
torch 1.4.0
```

```python
import torch

import os
import numpy as np
import pandas as pd
from tqdm import tqdm
import seaborn as sns
from pylab import rcParams
import matplotlib.pyplot as plt
from matplotlib import rc
from sklearn.model_selection import train_test_split
from sklearn.metrics import confusion_matrix, classification_report

from torch import nn, optim

import torch.nn.functional as F

%matplotlib inline
%config InlineBackend.figure_format='retina'

sns.set(style='whitegrid', palette='muted', font_scale=1.2)

HAPPY_COLORS_PALETTE =\
["#01BEFE", "#FFDD00", "#FF7D00", "#FF006D", "#93D30C", "#8F00FF"]

sns.set_palette(sns.color_palette(HAPPY_COLORS_PALETTE))

rcParams['figure.figsize'] = 12, 8

RANDOM_SEED = 42
np.random.seed(RANDOM_SEED)
torch.manual_seed(RANDOM_SEED)
```

Data

Our dataset contains daily weather information from multiple Australian weather stations. We’re about to answer a simple question: will it rain tomorrow?

The data is hosted on Kaggle and created by Joe Young. I’ve uploaded the dataset to Google Drive. Let’s get it:

```python
!gdown --id 1Q1wUptbNDYdfizk5abhmoFxIQiX19Tn7
```

And load it into a data frame:

```python
df = pd.read_csv('weatherAUS.csv')
```

We have a large set of features/columns here. You might also notice some NaNs. Let’s have a look at the overall dataset size:

```python
df.shape
```

```
(142193, 24)
```

Looks like we have plenty of data. But we have to do something about those missing values.

Data Preprocessing

We’ll simplify the problem by removing most of the data (mo money mo problems - Michael Scott). We’ll use only 4 columns (plus the RainTomorrow target) to predict whether or not it is going to rain tomorrow:

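```python
cols = ['Rainfall', 'Humidity3pm', 'Pressure9am', 'RainToday', 'RainTomorrow']

# .copy() gives us an independent DataFrame, so modifying its columns below
# doesn't trigger pandas' SettingWithCopyWarning
df = df[cols].copy()
```

Neural Networks work with numbers and not much else. We’ll convert yes and no to 1 and 0, respectively: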

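```python
# map() turns 'No'/'Yes' into 0/1 and leaves missing values as NaN,
# producing a numeric column we can later convert to a tensor
df['RainToday'] = df['RainToday'].map({'No': 0, 'Yes': 1})
df['RainTomorrow'] = df['RainTomorrow'].map({'No': 0, 'Yes': 1})
```

Let’s drop the rows with missing values. There are better ways to do this, but we’ll keep it simple: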

```python
df = df.dropna(how='any')
```

Finally, we have a dataset we can work with.

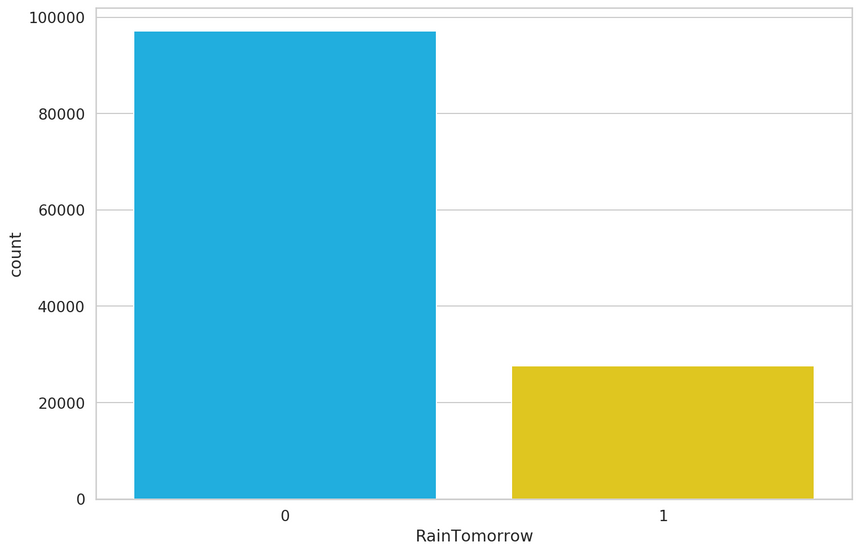

One important question we should answer is: how balanced is our dataset? In other words, how often did it rain (or not) on the following day?

```python
sns.countplot(x=df.RainTomorrow);
```

```python
df.RainTomorrow.value_counts() / df.shape[0]
```

```
0    0.778762
1    0.221238
Name: RainTomorrow, dtype: float64
```

Things are not looking good. About 78% of the data points have a non-rainy day for tomorrow. This means that a model that always predicts no rain tomorrow will be correct about 78% of the time.
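That 78% is the bar any useful model has to clear. A minimal sketch of such a majority-class baseline, using NumPy on a small hypothetical label array (not the real dataset), looks like this:

```python
import numpy as np

def majority_baseline_accuracy(labels):
    """Accuracy of a 'classifier' that always predicts the most frequent label."""
    labels = np.asarray(labels)
    values, counts = np.unique(labels, return_counts=True)
    majority = values[np.argmax(counts)]
    return majority, float(np.mean(labels == majority))

# Hypothetical toy labels: 0 = no rain tomorrow, 1 = rain tomorrow
toy_labels = np.array([0, 0, 0, 1, 0, 0, 1, 0, 0, 0])
majority, acc = majority_baseline_accuracy(toy_labels)
print(majority, acc)  # 0 0.8
```

Passed `df.RainTomorrow.to_numpy()`, the same helper would return the majority label 0 and an accuracy equal to the 0.778762 share shown above, since always predicting the majority class is right exactly as often as that class occurs.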

You can read and apply the Practical Guide to Handling Imbalanced Datasets if you want to mitigate this issue. Here, we’ll just hope for the best.

The final step is to split the data into train and test sets:

```python
X = df[['Rainfall', 'Humidity3pm', 'RainToday', 'Pressure9am']]
y = df[['RainTomorrow']]

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=RANDOM_SEED)
```

And convert all of it to Tensors (so we can use it with PyTorch):

```python
X_train = torch.from_numpy(X_train.to_numpy()).float()
y_train = torch.squeeze(torch.from_numpy(y_train.to_numpy()).float())

X_test = torch.from_numpy(X_test.to_numpy()).float()
y_test = torch.squeeze(torch.from_numpy(y_test.to_numpy()).float())

print(X_train.shape, y_train.shape)
print(X_test.shape, y_test.shape)
```

```
torch.Size([99751, 4]) torch.Size([99751])
torch.Size([24938, 4]) torch.Size([24938])
```

Building a Neural Network

We’ll build a simple Neural Network (NN) that tries to predict whether it will rain tomorrow.

Our input contains data from the four columns: Rainfall, Humidity3pm, RainToday, Pressure9am. We’ll create an appropriate input layer for that.

The output will be a number between 0 and 1, representing how likely (our model thinks) it is going to rain tomorrow. The prediction will be given to us by the final (output) layer of the network.

We’ll add two (hidden) layers between the input and output layers. The parameters (weights and biases) of those layers will decide the final output. All layers will be fully connected.

One easy way to build the NN with PyTorch is to create a class that inherits from torch.nn.Module:

```python
class Net(nn.Module):

    def __init__(self, n_features):
        super(Net, self).__init__()
        self.fc1 = nn.Linear(n_features, 5)
        self.fc2 = nn.Linear(5, 3)
        self.fc3 = nn.Linear(3, 1)

    def forward(self, x):
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        return torch.sigmoid(self.fc3(x))
```

```python
net = Net(X_train.shape[1])
```

We start by creating the layers of our model in the constructor. The forward() method is where the magic happens. It accepts the input x and allows it to flow through each layer.
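To see what forward() computes without any PyTorch machinery, here is a rough NumPy sketch of the same 4 → 5 → 3 → 1 architecture. The weights are random, untrained placeholders (hypothetical values). Also note a layout difference: nn.Linear stores its weight as (out_features, in_features) and computes `x @ W.T + b`, while this sketch stores each `W` as (in, out) so it can write `x @ W + b`.

```python
import numpy as np

def relu(z):
    return np.maximum(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)

# Hypothetical random weights with the same layer sizes as Net (4 -> 5 -> 3 -> 1).
# W is stored as (in_features, out_features) so that x @ W + b works on a batch.
W1, b1 = rng.normal(size=(4, 5)), np.zeros(5)
W2, b2 = rng.normal(size=(5, 3)), np.zeros(3)
W3, b3 = rng.normal(size=(3, 1)), np.zeros(1)

def forward(x):
    h1 = relu(x @ W1 + b1)        # like F.relu(self.fc1(x))
    h2 = relu(h1 @ W2 + b2)       # like F.relu(self.fc2(x))
    return sigmoid(h2 @ W3 + b3)  # like torch.sigmoid(self.fc3(x))

batch = rng.normal(size=(8, 4))  # 8 hypothetical rows with 4 features each
out = forward(batch)
print(out.shape)  # (8, 1)
```

The output has shape (batch, 1), which is why the training code later calls torch.squeeze before comparing predictions with the 1-D label tensors.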

There is a corresponding backward pass (defined for you by PyTorch) that allows the model to learn from the errors it is currently making.
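What the backward pass produces is the gradient of the loss with respect to every parameter, computed with the chain rule. For a single sigmoid neuron trained with binary cross-entropy (the loss we use below), the chain rule gives dL/dz = p - y, where z is the neuron's input to the sigmoid and p its output. This pure-Python sketch, with made-up values for `w`, `b`, `x` and `y`, checks that formula against a finite-difference estimate; PyTorch's autograd does the analytic part for every layer of the network.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def bce(p, y):
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

def loss(w, b, x, y):
    return bce(sigmoid(w * x + b), y)

def analytic_grad(w, b, x, y):
    # Chain rule: for sigmoid followed by BCE, dL/dz = p - y
    p = sigmoid(w * x + b)
    dz = p - y
    return dz * x, dz  # (dL/dw, dL/db)

def numeric_grad(w, b, x, y, h=1e-6):
    # Central finite differences, as an independent check
    dw = (loss(w + h, b, x, y) - loss(w - h, b, x, y)) / (2 * h)
    db = (loss(w, b + h, x, y) - loss(w, b - h, x, y)) / (2 * h)
    return dw, db

w, b, x, y = 0.3, -0.1, 2.0, 1.0  # hypothetical parameter and data values
print(analytic_grad(w, b, x, y))
print(numeric_grad(w, b, x, y))
```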

Activation Functions

You might notice the calls to F.relu and torch.sigmoid. Why do we need those?

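One of the cool features of Neural Networks is that they can approximate non-linear functions. In fact, the universal approximation theorem shows that a network with a single hidden layer, a non-linear activation, and enough neurons can approximate any continuous function on a bounded region as closely as you like.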

Good luck approximating non-linear functions by stacking linear layers, though: any stack of linear layers collapses into a single linear layer. Activation functions allow you to break from the linear world and learn (hopefully) more. You’ll usually find them applied to the output of a layer.
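The collapse is easy to verify: two linear layers W2(W1x + b1) + b2 compute the same thing as one layer with weight W2W1 and bias W2b1 + b2. A quick NumPy check with random (hypothetical) weights:

```python
import numpy as np

rng = np.random.default_rng(42)

# Two hypothetical linear layers (weights stored as (out, in), like nn.Linear)
W1, b1 = rng.normal(size=(5, 4)), rng.normal(size=5)
W2, b2 = rng.normal(size=(3, 5)), rng.normal(size=3)
x = rng.normal(size=4)

two_layers = W2 @ (W1 @ x + b1) + b2

# The same mapping expressed as a single layer
W = W2 @ W1
b = W2 @ b1 + b2
one_layer = W @ x + b

print(np.allclose(two_layers, one_layer))  # True
```

Putting a non-linearity such as ReLU between the two layers breaks this identity in general, which is exactly what lets deeper networks represent more than a single linear map.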

Those functions must be hard to define, right?

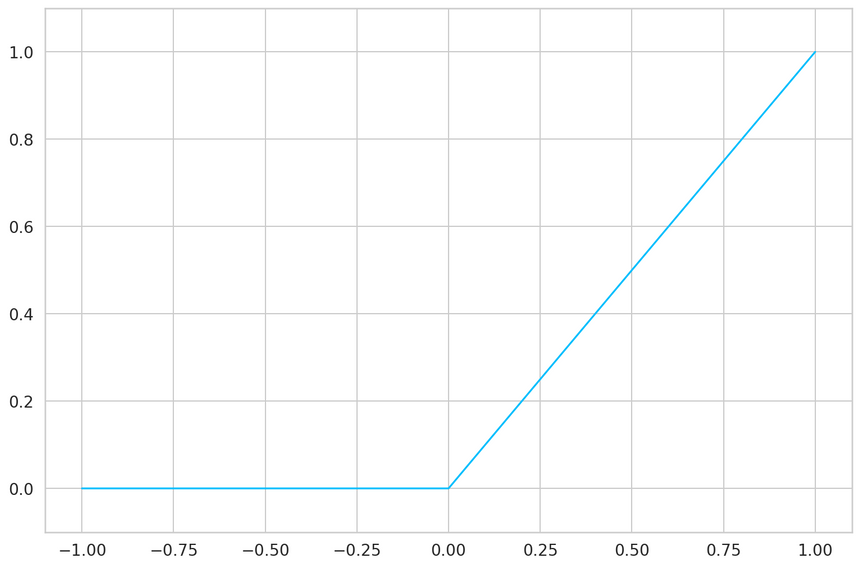

ReLU

Not at all. Let’s start with the ReLU (one of the most widely used activation functions):

$$\text{ReLU}(x) = \max(0, x)$$

Easy peasy: the result is the maximum of zero and the input:

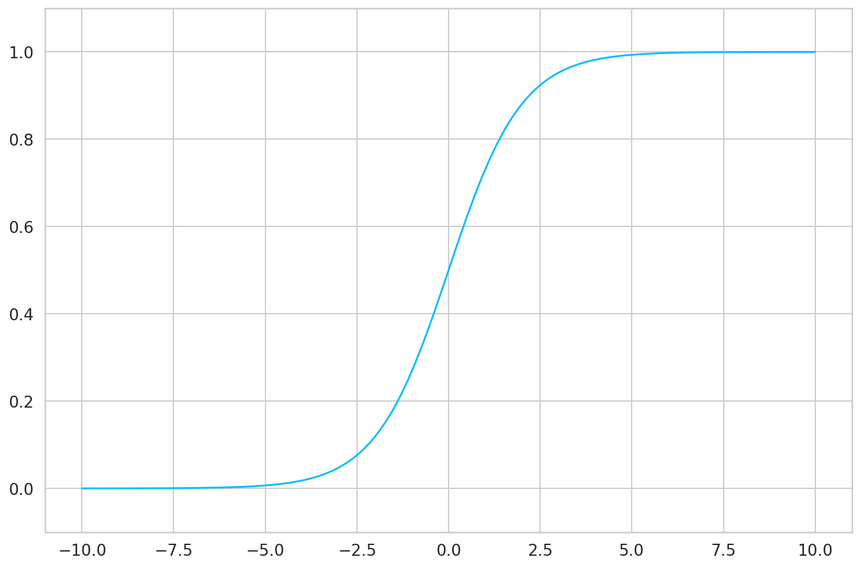
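In code, ReLU is a one-liner. A NumPy sketch (PyTorch’s F.relu applies the same rule element-wise to tensors):

```python
import numpy as np

def relu(x):
    # Element-wise max(0, x): negatives become 0, positives pass through unchanged
    return np.maximum(0, x)

values = np.array([-2.0, -0.5, 0.0, 1.5, 3.0])
print(relu(values).tolist())  # [0.0, 0.0, 0.0, 1.5, 3.0]
```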

Sigmoid

The sigmoid is useful when you need to make a binary decision/classification (answering with a yes or a no).

It is defined as:

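$$\text{Sigmoid}(x) = \frac{1}{1 + e^{-x}}$$

The sigmoid squishes any input value into the range between 0 and 1, and it does so smoothly: large negative inputs end up close to 0, large positive inputs close to 1, and an input of 0 maps to exactly 0.5.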
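A direct translation of the formula into plain Python, evaluated at a few points, shows the squishing in action. This is an illustrative sketch; the model itself uses torch.sigmoid.

```python
import math

def sigmoid(x):
    # Naive formula: fine for a demo, but math.exp(-x) raises OverflowError
    # for very negative x (below about -709)
    return 1.0 / (1.0 + math.exp(-x))

for x in [-6, -2, 0, 2, 6]:
    print(x, round(sigmoid(x), 4))
```

The curve is also symmetric around 0.5: Sigmoid(-x) = 1 - Sigmoid(x).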

Training

With the model in place, we need to find parameters that predict whether it will rain tomorrow. First, we need something that tells us how well we’re currently doing:

```python
criterion = nn.BCELoss()
```

BCELoss measures the binary cross-entropy between two vectors: in our case, the predictions of our model and the real (0 or 1) values. It expects the predictions to be probabilities between 0 and 1, such as the output of the sigmoid function. The closer the loss gets to 0, the better your model should be.
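For N predictions p_i and true labels y_i, the binary cross-entropy averaged over the batch (BCELoss’s default `reduction='mean'`) is:

$$\ell = -\frac{1}{N}\sum_{i=1}^{N}\left[y_i \log p_i + (1 - y_i)\log(1 - p_i)\right]$$

A plain-Python sketch of that formula shows how it rewards confident correct predictions and punishes confident wrong ones (the predictions below are made up):

```python
import math

def binary_cross_entropy(preds, targets):
    """Mean binary cross-entropy over a batch of probabilities and 0/1 targets."""
    total = 0.0
    for p, y in zip(preds, targets):
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(preds)

confident_right = binary_cross_entropy([0.9, 0.1], [1, 0])
confident_wrong = binary_cross_entropy([0.1, 0.9], [1, 0])
print(round(confident_right, 4), round(confident_wrong, 4))
```

One difference worth knowing: according to the PyTorch documentation, BCELoss clamps its log outputs to be at least -100, so a prediction of exactly 0 or 1 gives a large but finite loss, whereas this sketch would fail with a math domain error.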

But how do we find parameters that minimize the loss function?

Optimization

Imagine that each parameter of our NN is a knob. The optimizer’s job is to find the perfect positions for each knob so that the loss gets close to 0.

Real-world models can contain millions or even billions of parameters. With so many knobs to turn, it would be nice to have an efficient optimizer that quickly finds solutions.
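Most optimizers, Adam included, build on one idea: nudge each knob a little in the direction that lowers the loss, as given by the gradient. Here is a one-knob sketch of plain gradient descent in Python, on a made-up loss (knob - 3)² whose best position is 3:

```python
def loss(knob):
    # Hypothetical loss with its minimum at knob = 3
    return (knob - 3.0) ** 2

def grad(knob):
    # Derivative of the loss above
    return 2.0 * (knob - 3.0)

knob, lr = 0.0, 0.1
for step in range(50):
    knob -= lr * grad(knob)  # step against the gradient

print(round(knob, 4), round(loss(knob), 8))
```

Each step shrinks the distance to 3 by a factor of 1 - 2 * lr = 0.8, so after 50 steps the knob is within about 1e-4 of the optimum.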

Contrary to what you might believe, optimization in Deep Learning is just satisficing: in practice, you’re content with good-enough parameter values that give you an acceptable accuracy.

While there are tons of optimizers you can choose from, Adam is a safe first choice. PyTorch has a well-debugged implementation you can use:

```python
optimizer = optim.Adam(net.parameters(), lr=0.001)
```

Naturally, the optimizer requires the parameters. The second argument, lr, is the learning rate. It controls a tradeoff between how good the parameters you find are and how fast you get there. Finding good values for it can be black magic and a lot of brute-force “experimentation”.
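Roughly, Adam keeps a running average of each parameter’s gradients and of their squares, and scales every step by their ratio. Below is a single-parameter, plain-Python sketch of that update rule. It uses the same defaults as PyTorch’s optim.Adam for betas (0.9, 0.999) and eps (1e-8); the lr of 0.1 and the (x - 3)² loss are assumptions chosen so the demo converges quickly.

```python
import math

def adam_minimize(grad_fn, x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=1000):
    """Minimal single-parameter Adam following the update rule from the Adam paper."""
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad_fn(x)
        m = beta1 * m + (1 - beta1) * g        # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g    # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)           # bias correction for the zero init
        v_hat = v / (1 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x

# Hypothetical loss (x - 3)^2, whose gradient is 2 * (x - 3)
x_star = adam_minimize(lambda x: 2.0 * (x - 3.0), x0=0.0)
print(round(x_star, 3))
```

Because steps are divided by the gradient’s running magnitude, the very first update moves x by about lr no matter how large the gradient is.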

Doing it on the GPU

Doing massively parallel computations on GPUs is one of the enablers of modern Deep Learning. You’ll need an NVIDIA GPU (with CUDA) for that.

PyTorch makes it really easy to transfer all the computation to your GPU:

```python
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
```

```python
X_train = X_train.to(device)
y_train = y_train.to(device)

X_test = X_test.to(device)
y_test = y_test.to(device)
```

```python
net = net.to(device)

criterion = criterion.to(device)
```

We start by checking whether or not a CUDA device is available. Then, we transfer all training and test data to that device. Finally, we move our model and loss function.

Weather Forecasting

Having a loss function is great, but accuracy is easier for us mere mortals to understand. Here’s the definition of our accuracy:

```python
def calculate_accuracy(y_true, y_pred):
    predicted = y_pred.ge(.5).view(-1)
    return (y_true == predicted).sum().float() / len(y_true)
```

We convert every value below 0.5 to 0 and every other value to 1. Finally, we calculate the fraction of correct predictions.
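A worked example with made-up numbers makes the thresholding concrete. This NumPy sketch mirrors calculate_accuracy (the `_np` name is just for illustration); note that `ge` means “greater than or equal”, so a prediction of exactly 0.5 counts as rain:

```python
import numpy as np

def calculate_accuracy_np(y_true, y_pred, threshold=0.5):
    # Same rule as y_pred.ge(.5): values >= threshold become 1, the rest 0
    predicted = (y_pred >= threshold).astype(y_true.dtype)
    return float((predicted == y_true).mean())

y_true = np.array([0.0, 1.0, 0.0, 0.0])
y_prob = np.array([0.2, 0.7, 0.5, 0.4])  # hypothetical model outputs
print(calculate_accuracy_np(y_true, y_prob))  # 0.75
```

The thresholded predictions are [0, 1, 1, 0]; only the third one (0.5, rounded up to rain) is wrong, giving 3 / 4 = 0.75.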

With all the pieces of the puzzle in place, we can start training our model:

```python
def round_tensor(t, decimal_places=3):
    return round(t.item(), decimal_places)

for epoch in range(1000):

    # Forward pass on the whole training set
    y_pred = net(X_train)

    y_pred = torch.squeeze(y_pred)
    train_loss = criterion(y_pred, y_train)

    if epoch % 100 == 0:
        train_acc = calculate_accuracy(y_train, y_pred)

        # Evaluation only: no need to track gradients for the test set
        with torch.no_grad():
            y_test_pred = net(X_test)
            y_test_pred = torch.squeeze(y_test_pred)

            test_loss = criterion(y_test_pred, y_test)

            test_acc = calculate_accuracy(y_test, y_test_pred)
        print(
f'''epoch {epoch}
Train set - loss: {round_tensor(train_loss)}, accuracy: {round_tensor(train_acc)}
Test set - loss: {round_tensor(test_loss)}, accuracy: {round_tensor(test_acc)}
''')

    # Clear old gradients, backpropagate, and update the parameters
    optimizer.zero_grad()

    train_loss.backward()

    optimizer.step()
```

```
epoch 0
Train set - loss: 2.513, accuracy: 0.779
Test set - loss: 2.517, accuracy: 0.778

epoch 100
Train set - loss: 0.457, accuracy: 0.792
Test set - loss: 0.458, accuracy: 0.793

epoch 200
Train set - loss: 0.435, accuracy: 0.801
Test set - loss: 0.436, accuracy: 0.8

epoch 300
Train set - loss: 0.421, accuracy: 0.814
Test set - loss: 0.421, accuracy: 0.815

epoch 400
Train set - loss: 0.412, accuracy: 0.826
Test set - loss: 0.413, accuracy: 0.827

epoch 500
Train set - loss: 0.408, accuracy: 0.831
Test set - loss: 0.408, accuracy: 0.832

epoch 600
Train set - loss: 0.406, accuracy: 0.833
Test set - loss: 0.406, accuracy: 0.835

epoch 700
Train set - loss: 0.405, accuracy: 0.834
Test set - loss: 0.405, accuracy: 0.835

epoch 800
Train set - loss: 0.404, accuracy: 0.834
Test set - loss: 0.404, accuracy: 0.835

epoch 900
Train set - loss: 0.404, accuracy: 0.834
Test set - loss: 0.404, accuracy: 0.836
```

During training, we show our model the data 1,000 times. Each time, we measure the loss, propagate the errors through our model, and ask the optimizer to find better parameters.

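By default, PyTorch accumulates gradients: every call to backward() adds to the gradients already stored on the parameters. The zero_grad() method clears them, so the optimizer updates the parameters using only the gradients from the current step.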
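The training loop boils down to four steps: forward pass, loss, backward pass, optimizer step. For comparison, here is a NumPy sketch of the same steps for a much smaller model, a single linear layer followed by a sigmoid (logistic regression), trained with plain gradient descent on a hypothetical toy dataset. The gradients are written out by hand instead of coming from backward().

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical toy data: 200 points, 2 features, label 1 when the features sum above 0
X = rng.normal(size=(200, 2))
y = (X[:, 0] + X[:, 1] > 0).astype(float)

w = np.zeros(2)
b = 0.0
lr = 0.5
losses = []

for epoch in range(200):
    # Forward pass: linear layer + sigmoid
    p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
    p = np.clip(p, 1e-7, 1 - 1e-7)  # keep log() finite
    loss = -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))
    losses.append(loss)

    # Backward pass: gradients of the mean BCE w.r.t. w and b.
    # They are recomputed from scratch every step, which is what
    # zero_grad() guarantees for accumulated gradients in PyTorch.
    dz = (p - y) / len(y)
    grad_w = X.T @ dz
    grad_b = dz.sum()

    # Optimizer step: plain gradient descent
    w -= lr * grad_w
    b -= lr * grad_b

print(round(losses[0], 3), round(losses[-1], 3))
```

With all weights at zero every prediction starts at 0.5, so the first loss is log 2 ≈ 0.693; it then falls as the parameters improve.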

What about that accuracy? 83.6% accuracy on the test set sounds reasonable, right? Well, I am about to disappoint you. But first, let’s learn how to save and load our trained models.

Saving the model

Training a good model can take a lot of time. And I mean weeks, months or even years. So, let’s make sure that you know how you can save your precious work. Saving is easy:

```python
MODEL_PATH = 'model.pth'

torch.save(net, MODEL_PATH)
```

Restoring your model is easy too:

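```python
net = torch.load(MODEL_PATH)
```

On PyTorch 2.6 and later, torch.load defaults to weights_only=True and refuses to unpickle a whole model object like this one; on those versions, call torch.load(MODEL_PATH, weights_only=False), and only for files you trust.

Evaluation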

Wouldn’t it be perfect to know about all the errors your model can make? Of course, that’s impossible. But you can get an estimate.

Using just accuracy wouldn’t be a good way to do it. Recall that our data contains mostly no rain examples.

One way to delve a bit deeper into your model performance is to assess the precision and recall for each class. In our case, that will be no rain and rain:

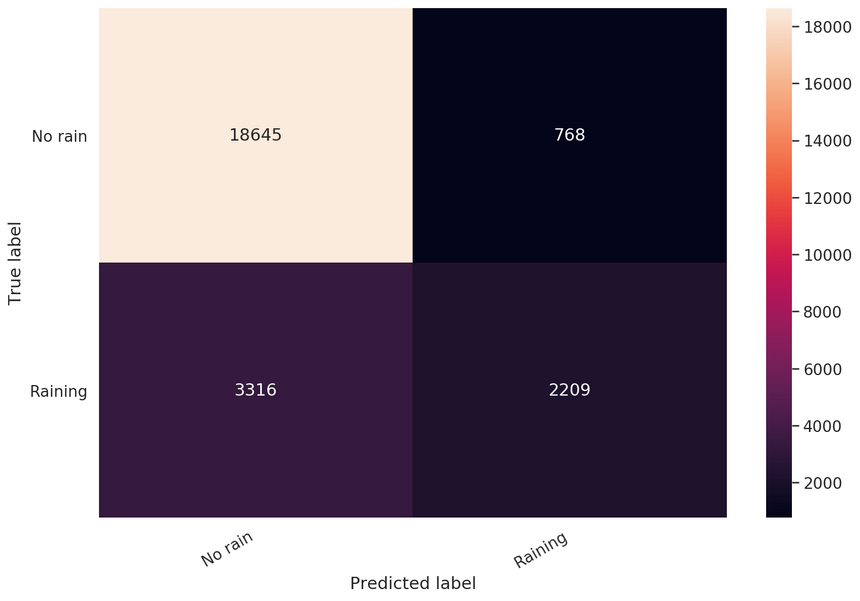

```python
classes = ['No rain', 'Raining']

y_pred = net(X_test)

y_pred = y_pred.ge(.5).view(-1).cpu()
y_test = y_test.cpu()

print(classification_report(y_test, y_pred, target_names=classes))
```

```
              precision    recall  f1-score   support

     No rain       0.85      0.96      0.90     19413
     Raining       0.74      0.40      0.52      5525

    accuracy                           0.84     24938
   macro avg       0.80      0.68      0.71     24938
weighted avg       0.83      0.84      0.82     24938
```

A precision of 1 means that every example the model assigns to a class really belongs to it. A recall of 1 means that the model finds all examples of that class in the dataset.
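Both metrics are simple ratios of counts: precision = TP / (TP + FP) and recall = TP / (TP + FN), where TP, FP and FN are the true positives, false positives and false negatives for the class in question; the f1-score is their harmonic mean. A pure-Python sketch on ten hypothetical labels (1 = rain):

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical labels: five rainy days, five dry days
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0, 0, 1]
print(precision_recall_f1(y_true, y_pred))
```

Here the model catches 2 of the 5 rainy days (recall 0.4) and 2 of its 3 rain predictions are correct (precision ≈ 0.67), giving an f1-score of 0.5. In sklearn’s confusion_matrix for 0/1 labels (rows are true labels, columns are predictions), these counts sit at cm[1, 1] (TP), cm[0, 1] (FP) and cm[1, 0] (FN) when rain is the positive class.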

You can see that our model is doing well on the No rain class, for which we have many examples. Unfortunately, we can’t really trust it on the Raining class: a recall of 0.40 means it finds only 40% of the rainy days.

One of the best things about binary classification is that you can have a good look at a simple confusion matrix:

```python
cm = confusion_matrix(y_test, y_pred)
df_cm = pd.DataFrame(cm, index=classes, columns=classes)

hmap = sns.heatmap(df_cm, annot=True, fmt="d")
hmap.yaxis.set_ticklabels(hmap.yaxis.get_ticklabels(), rotation=0, ha='right')
hmap.xaxis.set_ticklabels(hmap.xaxis.get_ticklabels(), rotation=30, ha='right')
plt.ylabel('True label')
plt.xlabel('Predicted label');
```

You can clearly see that our model shouldn’t be trusted to tell you when it’s going to rain: most of the days on which it actually rained end up labeled No rain.

Making Predictions

Let’s pick our model’s brain and try it out on some hypothetical examples:

```python
def will_it_rain(rainfall, humidity, rain_today, pressure):
    t = torch.as_tensor([rainfall, humidity, rain_today, pressure]) \
        .float() \
        .to(device)
    with torch.no_grad():  # inference only, no need to track gradients
        output = net(t)
    return output.ge(0.5).item()
```

This little helper returns a binary response based on your model’s prediction. Let’s try it out:

```python
will_it_rain(rainfall=10, humidity=10, rain_today=True, pressure=2)
```

```
True
```

```python
will_it_rain(rainfall=0, humidity=1, rain_today=False, pressure=100)
```

```
False
```

Okay, we got two different responses based on some parameters (yep, the power of brute force). Your model is ready for deployment (but please don’t)!

Conclusion

Well done! You now have a Neural Network that can predict the weather. Well, sort of. Building well-performing models is hard, really hard. But there are tricks you’ll pick up along the way and (hopefully) get better at your craft!


You learned how to:

- Preprocess CSV files and convert the data to Tensors

- Build your own Neural Network model with PyTorch

- Use a loss function and an optimizer to train your model

- Evaluate your model and learn about the perils of imbalanced classification
